AI Summary of Peer-Reviewed Research

This page presents an AI-generated summary of a published research paper. The original authors did not write or review this article. See the Disclosure section below.

Publication Signals show what we were able to verify about where this research was published.

STRONG: We verified multiple publication signals for this source, including independently confirmed credentials. Publication Signals reflect the source’s verifiable credentials, not the quality of the research.

- ✔ Peer-reviewed source

- ✔ Published in indexed journal

- ✔ No retraction or integrity flags

Key findings from this study

This research indicates that:

- Pre-course aptitude testing integrating mental models, background factors, and confidence levels can predict introductory programming assessment outcomes with moderate reliability for identifying at-risk students.

- A Random Forest Regressor generalized better than the Random Forest Classifier, performing consistently across training and test data with minimal overfitting.

- Class imbalance and limited sample size contributed to performance degradation in the classifier but less so in the regressor, indicating algorithm selection influences model robustness.

Overview

This investigation examined the predictive capacity of pre-course aptitude testing for identifying first-year computer science students who require additional support in introductory programming. The aptitude test integrated students' background information, prior experiences, self-reported confidence levels, and mental models of core programming concepts. Data from 285 undergraduate students were used to develop and validate machine learning models capable of predicting first assessment performance.

Methods and approach

The study employed a custom-developed aptitude test administered before the programming module commenced. The test captured demographic and prior experience data, perceived confidence, and conceptual understanding across fundamental programming topics. Multiple regression and classification algorithms were trained on the collected data, with model selection focusing on Random Forest approaches. Sequential Feature Selection refined the selected models, which underwent validation against a held-out test set to evaluate generalizability.
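The workflow described above (Sequential Feature Selection, a Random Forest model, and a held-out test set) could be sketched roughly as follows with scikit-learn. This is a minimal illustration, not the authors' pipeline: the data are synthetic, and the six feature columns, the score formula, the 80/20 split, the number of selected features, and every hyperparameter are assumptions made for the example. Only the cohort size of 285 comes from the summary.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor
from sklearn.feature_selection import SequentialFeatureSelector
from sklearn.metrics import mean_absolute_error, mean_squared_error
from sklearn.model_selection import train_test_split

# Synthetic stand-in data. 285 rows mirrors the reported cohort size; the six
# feature columns and the score formula are invented for illustration only.
rng = np.random.default_rng(0)
X = rng.random((285, 6))
noise = 0.1 * rng.standard_normal(285)
y = np.clip(0.5 * X[:, 0] + 0.3 * X[:, 1] + 0.1 + noise, 0.0, 1.0)

# Hold out a test set before any feature selection (the 80/20 split is assumed).
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, random_state=0
)

# Forward sequential feature selection, scored by cross-validation on the
# training data only. Keeping 3 features is an arbitrary choice for the sketch.
selector = SequentialFeatureSelector(
    RandomForestRegressor(n_estimators=25, random_state=0),
    n_features_to_select=3,
    direction="forward",
    cv=3,
)
selector.fit(X_train, y_train)

# Refit the regressor on the selected features.
model = RandomForestRegressor(n_estimators=100, random_state=0)
model.fit(selector.transform(X_train), y_train)


def rmse_mae(features, target):
    """Return (RMSE, MAE) of the fitted model on the given data."""
    predictions = model.predict(selector.transform(features))
    rmse = float(np.sqrt(mean_squared_error(target, predictions)))
    mae = float(mean_absolute_error(target, predictions))
    return rmse, mae


train_rmse, train_mae = rmse_mae(X_train, y_train)
test_rmse, test_mae = rmse_mae(X_test, y_test)
print(f"train RMSE={train_rmse:.4f} MAE={train_mae:.4f}")
print(f"test  RMSE={test_rmse:.4f} MAE={test_mae:.4f}")
```

Fitting the selector on the training split alone keeps the test set untouched until the final evaluation, which is what makes the train-versus-test comparison meaningful.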

Results

The Random Forest Classifier achieved training performance of AUC = 0.8688, F1 = 0.8353, and accuracy = 0.7450. Test set performance declined to AUC = 0.7670, F1 = 0.7020, and accuracy = 0.7020, indicating moderate overfitting likely attributable to class imbalance and limited sample size. The Random Forest Regressor demonstrated more consistent performance, with training RMSE = 0.1616 and MAE = 0.1209 versus test RMSE = 0.1713 and MAE = 0.1396, suggesting minimal overfitting despite notable prediction error margins. The regressor showed greater potential for practical application in identifying students requiring support.
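The train-to-test gaps behind this comparison can be computed directly from the reported figures. The sketch below uses only the numbers quoted above; the dictionary layout and the helper name `gaps` are illustrative choices, not part of the study.

```python
# Metrics as reported in the summary: (training value, test value).
classifier = {
    "AUC": (0.8688, 0.7670),
    "F1": (0.8353, 0.7020),
    "accuracy": (0.7450, 0.7020),
}
regressor = {
    "RMSE": (0.1616, 0.1713),
    "MAE": (0.1209, 0.1396),
}


def gaps(metrics):
    """Map each metric to (absolute gap, gap relative to the training value)."""
    result = {}
    for name, (train, test) in metrics.items():
        absolute = abs(test - train)
        result[name] = (absolute, absolute / train)
    return result


classifier_gaps = gaps(classifier)
regressor_gaps = gaps(regressor)

for label, table in (("classifier", classifier_gaps), ("regressor", regressor_gaps)):
    for name, (absolute, relative) in table.items():
        print(f"{label:10s} {name:8s} gap={absolute:.4f} ({relative:.1%} of train)")
```

In absolute terms the regressor's gaps (about 0.01 to 0.02) are much smaller than the classifier's (about 0.04 to 0.13). Score metrics and error metrics are not on the same scale, though, so the comparison is indicative rather than exact. Relative to its training value, the MAE gap is closer in size to the classifier's.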

Implications

Early identification of at-risk students enables timely intervention design and resource allocation in introductory programming instruction. The predictive framework combines multiple factor categories—background, confidence, and conceptual readiness—offering a more comprehensive screening approach than single-dimension assessments. Integration of such testing into module onboarding creates opportunities for targeted pedagogical interventions matched to student need profiles. Future work should address data imbalance and expand sample sizes to improve model robustness and generalizability across institutional contexts.

Scope and limitations

This summary is based on the study abstract and available metadata. It does not include a full analysis of the complete paper, supplementary materials, or underlying datasets unless explicitly stated. Findings should be interpreted in the context of the original publication.

Disclosure

- Research title: Harnessing Pre-Course Aptitude Tests to Predict Performance on an Introductory Programming Assessment in Higher Education

- Authors: Oliver Kerr, Linden J. Ball, Nicky Danino

- Institutions: Leeds Trinity University, University of Lancashire

- Publication date: 2026-03-30

- DOI: https://doi.org/10.1145/3806059

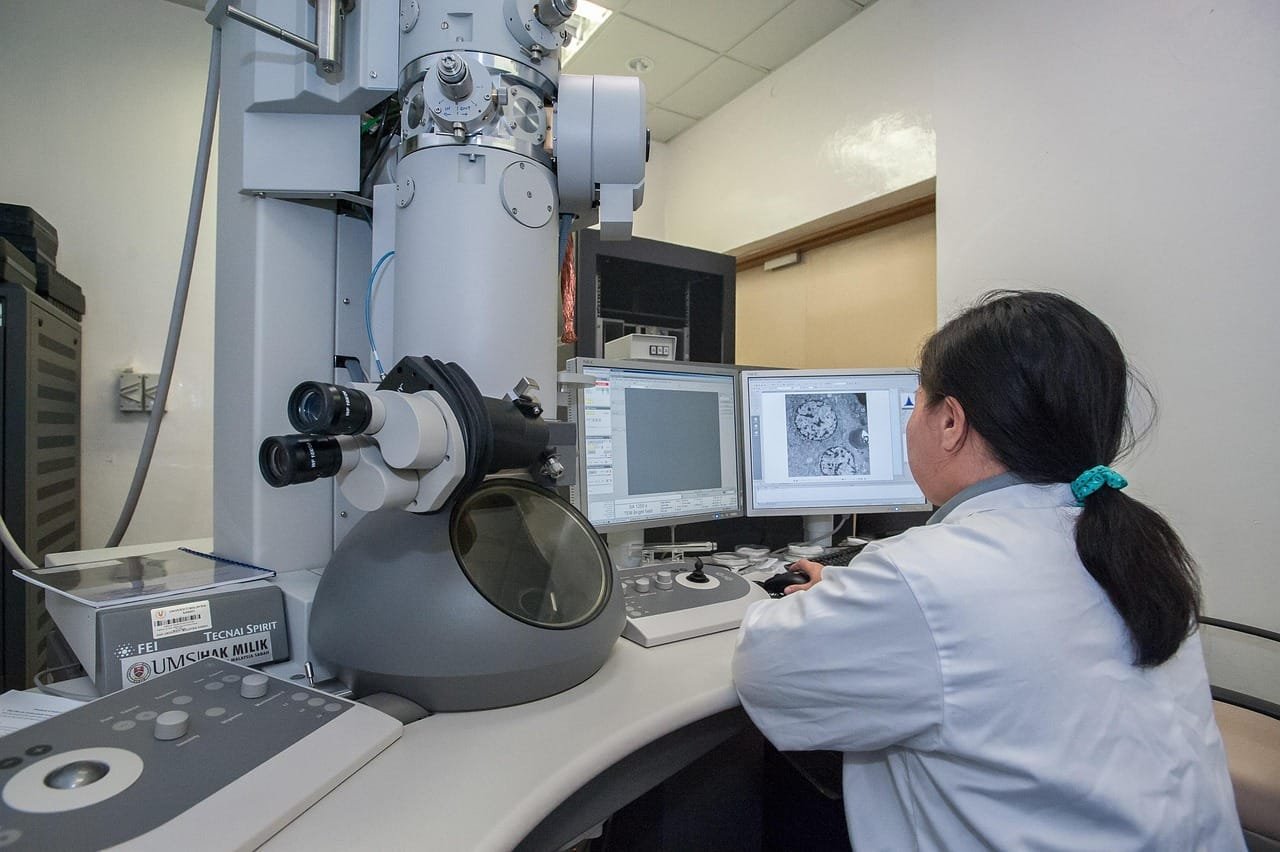

- Image credit: Photo by kennethr on Pixabay

- Disclosure: This post was generated by Claude (Anthropic). The original authors did not write or review this post.
