AI Summary of Peer-Reviewed Research

This page presents an AI-generated summary of a published research paper. The original authors did not write or review this article.

Publication Signals show what we were able to verify about where this research was published. MODERATE: Core publication signals for this source were verified. Publication Signals reflect the source’s verifiable credentials, not the quality of the research.

- ✔ Peer-reviewed source

- ✔ No retraction or integrity flags

Overview

Machine learning models integrated into neuroprosthetic devices such as visual and cortical prostheses generate electrical stimulation patterns. While offering potential for precise and personalized neural control, these systems introduce uncharacterized safety risks when model outputs directly interface with neural tissue. This work addresses the gap in systematic safety assessment by applying coverage-guided fuzzing, an automated software testing methodology, to detect and characterize unsafe stimulation patterns in ML-driven neurostimulation systems.

Methods and approach

The approach adapts coverage-guided fuzzing from software engineering to neural stimulation safety testing. The framework treats stimulus encoders as black boxes and stress-tests them by systematically perturbing model inputs, checking whether the resulting stimulation patterns violate established biophysical constraints on charge density, instantaneous current, and electrode co-activation. Coverage metrics quantify how thoroughly the test cases span the output space and how diverse the encountered violation types are. The method was applied to deep stimulus encoders designed for retinal and cortical stimulation.
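The loop described above can be illustrated with a minimal sketch. Everything in it is an assumption made for illustration and is not taken from the paper: the threshold values, the electrode geometry, the `toy_encoder` stand-in for a deep stimulus encoder, and the binned `output_signature` used as a coverage key are all hypothetical. The sketch only shows the general shape of violation-focused, coverage-guided fuzzing: mutate an input, query the encoder as a black box, check the output against safety limits, and keep the input in the corpus when it produces a violation pattern not seen before.

```python
import random

# Hypothetical safety limits (illustrative values only, not from the paper).
MAX_CURRENT_UA = 100.0       # per-electrode instantaneous current, microamps
MAX_CHARGE_DENSITY = 0.35    # charge density per phase, mC/cm^2
MAX_ACTIVE_ELECTRODES = 3    # limit on simultaneously active electrodes
ACTIVE_THRESHOLD_UA = 20.0   # current above which an electrode counts as active
PULSE_WIDTH_MS = 0.5         # assumed pulse phase duration
ELECTRODE_AREA_CM2 = 2e-4    # assumed electrode surface area


def toy_encoder(inputs):
    """Hypothetical stand-in for a stimulus encoder: inputs in [0, 1] -> currents in uA."""
    return [150.0 * x * x for x in inputs]


def check_violations(currents):
    """Return the set of violated constraint types for one stimulation pattern."""
    violations = set()
    if any(abs(c) > MAX_CURRENT_UA for c in currents):
        violations.add("current")
    # uA * ms = nC; multiply by 1e-6 to get mC, then divide by area for mC/cm^2.
    if any(abs(c) * PULSE_WIDTH_MS * 1e-6 / ELECTRODE_AREA_CM2 > MAX_CHARGE_DENSITY
           for c in currents):
        violations.add("charge_density")
    if sum(1 for c in currents if abs(c) > ACTIVE_THRESHOLD_UA) > MAX_ACTIVE_ELECTRODES:
        violations.add("co_activation")
    return frozenset(violations)


def output_signature(currents, bin_width=50.0):
    """Coarse bucketing of the output space, used here as an assumed coverage key."""
    return tuple(int(abs(c) // bin_width) for c in currents)


def fuzz(encoder, seed_input, iterations=2000, step=0.2, rng=None):
    """Violation-focused fuzzing loop that only calls the encoder as a black box."""
    rng = rng or random.Random(0)
    corpus = [list(seed_input)]
    covered = set()   # (violation types, output signature) pairs seen so far
    unsafe = []       # inputs that produced a new unsafe output
    for _ in range(iterations):
        parent = rng.choice(corpus)
        # Perturb each input value and clamp it to the valid range [0, 1].
        child = [min(1.0, max(0.0, x + rng.uniform(-step, step))) for x in parent]
        currents = encoder(child)
        violations = check_violations(currents)
        if not violations:
            continue
        key = (violations, output_signature(currents))
        if key not in covered:
            # New violation coverage: record it and keep the input for further mutation.
            covered.add(key)
            corpus.append(child)
            unsafe.append((child, violations))
    return unsafe, covered


unsafe, covered = fuzz(toy_encoder, [0.5, 0.5, 0.5, 0.5])
print(len(unsafe), "distinct unsafe outputs found")
print(sorted({v for _, vs in unsafe for v in vs}), "violation types seen")
```

Keeping only inputs that reach new violation coverage biases the search toward unsafe regions of the output space, which is one plausible reading of the "violation-output coverage" idea; the metrics actually used in the paper may be defined differently.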

Key findings

Violation-focused fuzzing systematically identified diverse stimulation regimes that exceeded established safety thresholds in both retinal and cortical encoder architectures. Two violation-output coverage metrics identified the highest number and greatest diversity of unsafe outputs, which enabled quantitative comparison across model architectures and training strategies. The analysis revealed systematic patterns of safety violations that conventional evaluation protocols would not surface.

Implications

This work establishes a reproducible, empirical framework for safety assessment in ML-driven neural interfaces. By converting safety from an implicit training objective into a directly measurable property of deployed models, violation-focused fuzzing enables evidence-based benchmarking and standardization of safety evaluation. The approach provides a foundation for regulatory frameworks and ethical assurance protocols in next-generation neuroprosthetic systems, where ML-generated stimulation patterns are delivered directly to neural tissue.

Disclosure

- Research title: Fuzzing the brain: automated stress testing for the safety of ML-driven neurostimulation

- Authors: Mara Downing, Matthew Peng, Jacob Granley, Michael Beyeler, Tevfik Bultan

- Publication date: 2026-02-23

- DOI: https://doi.org/10.1088/1741-2552/ae4927

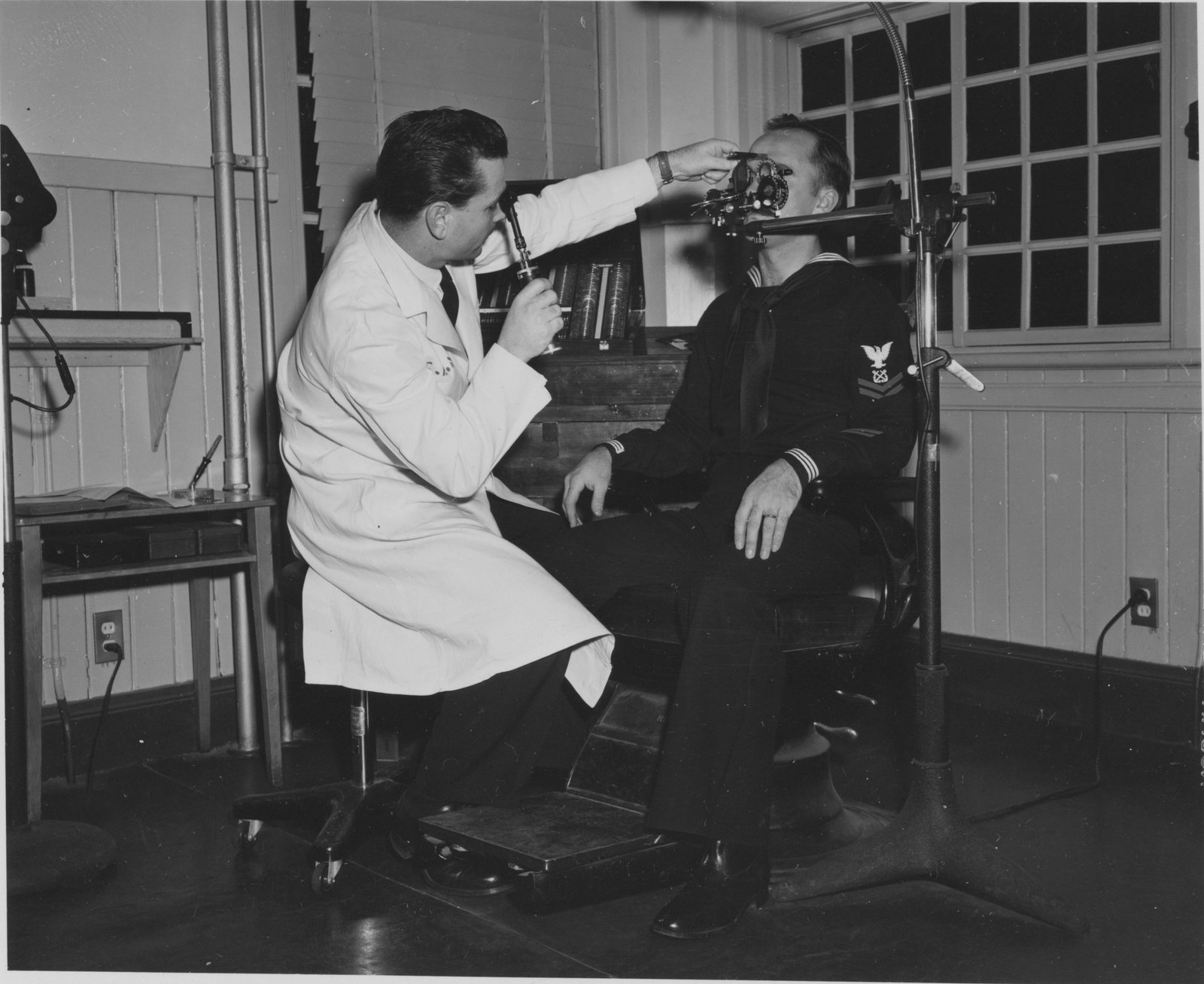

- Image credit: Photo by Navy Medicine on Unsplash

- Disclosure: This post was generated by Claude (Anthropic). The original authors did not write or review this post.
