Concept: Parallel Computing and Optimization Techniques

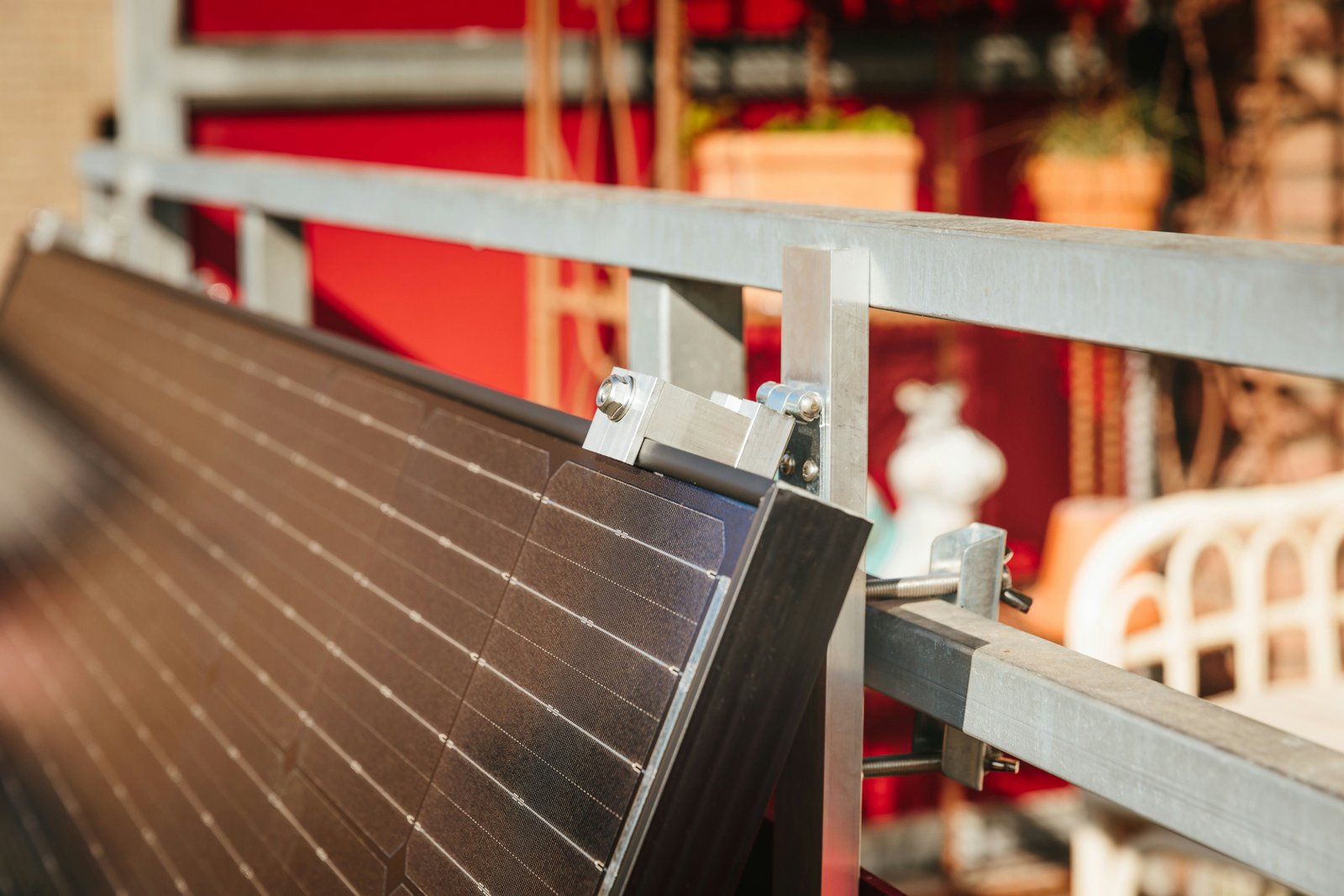

Adaptive CPU frequency scaling for energy-efficient and sustainable edge computing under renewable energy uncertainty

Optimizing CPU performance on renewable-powered edge servers using machine learning

Thoth: Uncovering Data-Dependent Memory Access Patterns via Annotation-Directed Load Sampling

Hardware prefetcher for irregular memory access in sparse data structures

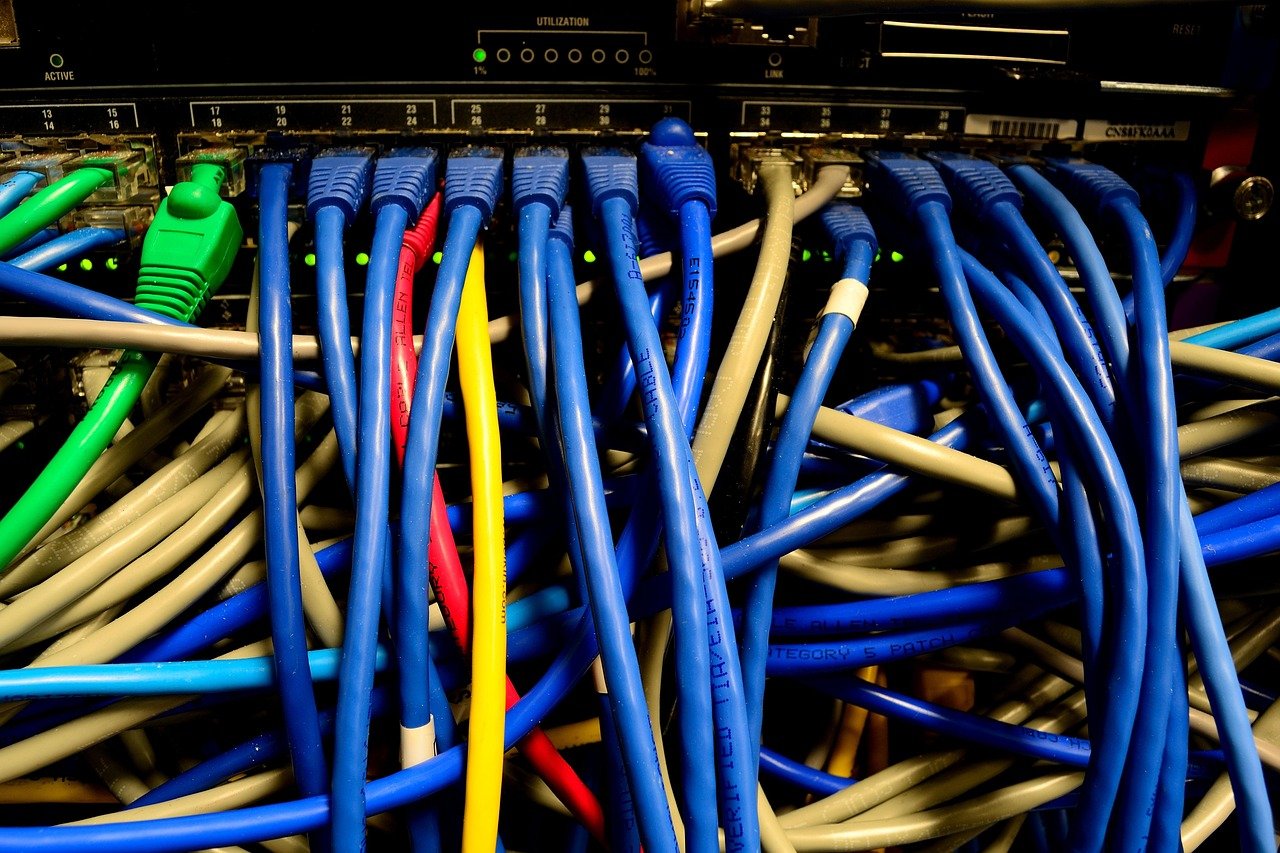

CXL-SpecKV: A Disaggregated FPGA Speculative KV-Cache for Datacenter LLM Serving

Offloading memory to remote accelerators improves LLM inference speed and reduces costs

It’s about Time: Temporal Abstractions for Asynchronous GPU Tensor Computations

Managing timing and coordination in asynchronous GPU tensor operations

SLAWS: Spatial Locality Analysis and Workload Orchestration for Sparse Matrix Multiplication

Improving efficiency of sparse matrix multiplication through adaptive locality analysis

PAT: Accelerating LLM Decoding via Prefix-Aware Attention with Resource Efficient Multi-Tile Kernel

Accelerating language model inference by reusing shared prompt cache across concurrent requests

Performance and energy consumption optimization of ternary optical computers based on the M/G/1 queuing model

Energy-efficient scheduling for optical computers using queuing theory

Dynamic Latency-Throughput Balancing in Distributed Large Model Inference with Interleaved Parallelism

Dynamically balancing latency and throughput when serving large AI models across multiple GPUs

WaSC: Hardening WebAssembly Sandboxes via System Interface Decoupling

Improving WebAssembly security through isolated system interface management

Integrating Quantum Software Tools with(in) MLIR

Using MLIR to create unified quantum software toolchains